With the release of vCloud Director 1.5.1 last night, the vCloud Director cell now supports Red Hat Enterprise Linux 5.7 (x86_64) as its operating system. If your current cell runs Red Hat Enterprise Linux 5.6 and you want to upgrade to the most recent supported release, here are the steps. Be careful, however, not to upgrade to Red Hat Enterprise Linux 5.8, which was released on 21 February 2012. RHEL 5.8 is not on VMware's official list of supported operating systems.

In the following screenshots we will use the yum update tool to make sure we upgrade to RHEL 5.7 only.

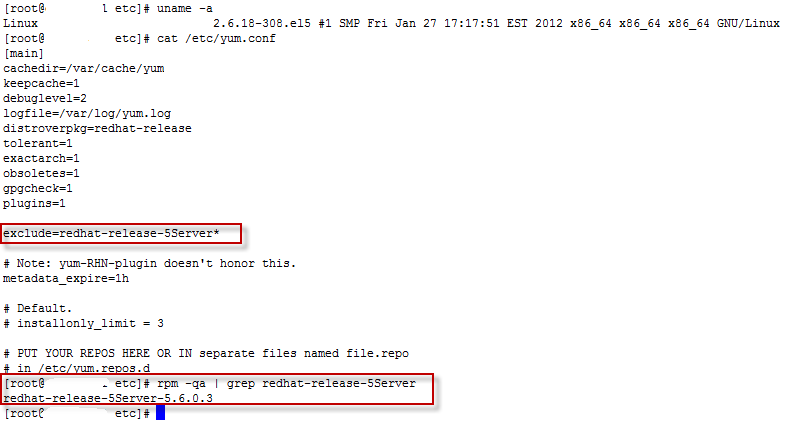

The first screenshot shows the current kernel (2.6.18-308.el5) on the RHEL 5.6 system, and the yum.conf file, which contains an explicit exclude=redhat-release-5Server* rule. We also see that the installed release package is redhat-release-5Server-5.6.0.3.
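For readers who prefer text over screenshots, the same checks can be sketched in shell. This is only a sketch: `show_excludes` is a hypothetical helper name, not something from the original post, and the commands in the trailing comments assume a stock RHEL 5 system where the release package is named `redhat-release`.

```shell
#!/bin/sh
# Print the active (uncommented) exclude rules from a yum configuration file.
# The path is an argument so the helper can be tried on a copy first;
# it defaults to /etc/yum.conf.
show_excludes() {
    conf="${1:-/etc/yum.conf}"
    grep -E '^[[:space:]]*exclude[[:space:]]*=' "$conf"
}

# Typical checks on the cell (left as comments so the snippet is safe to source):
# uname -r                 # running kernel
# rpm -q redhat-release    # installed release package
# show_excludes            # active exclude rules in /etc/yum.conf
```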

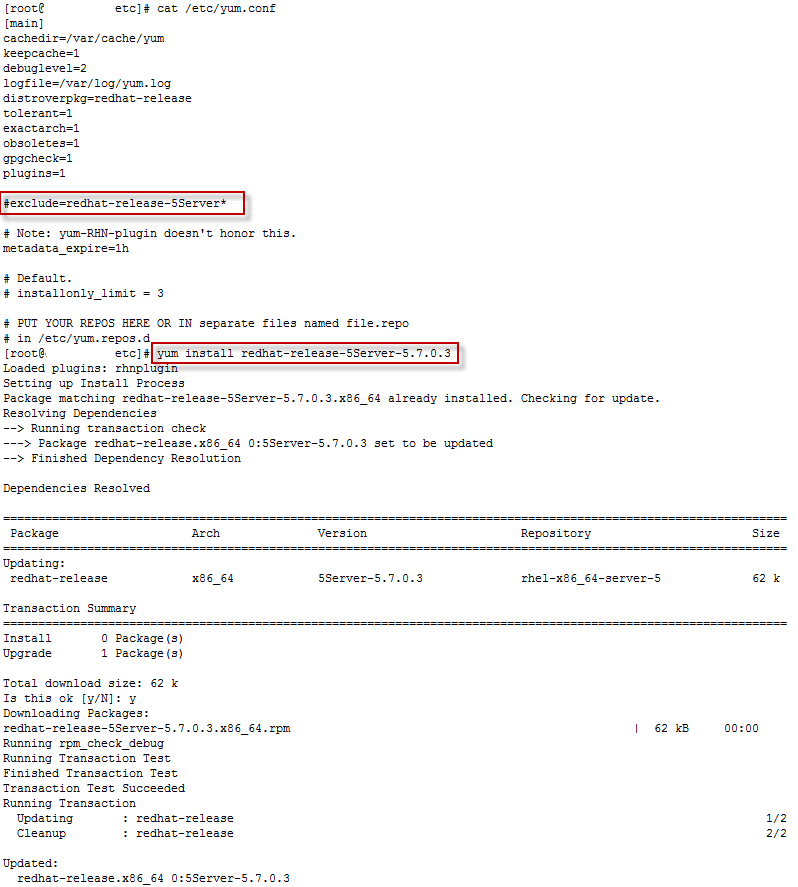

We will now modify /etc/yum.conf so that we can download the redhat-release-5Server-5.7.0.3.x86_64.rpm file. We comment out the exclude line and immediately install the release package for RHEL 5.7.

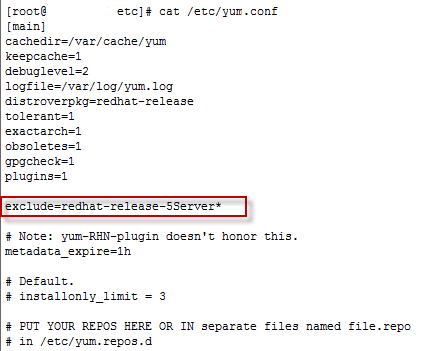

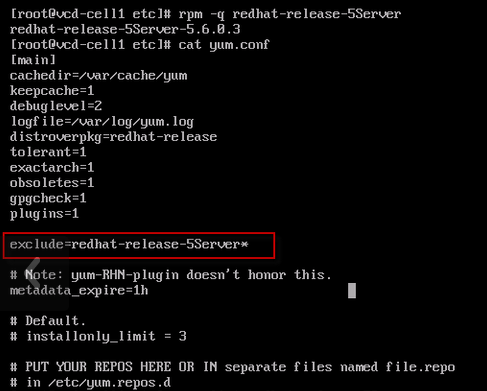

Now it’s important to immediately re-enable the exclusion of redhat-release-5Server, so that you do not upgrade to RHEL 5.8 by accident.
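The disable, install, re-enable sequence described above could be scripted roughly as follows. Treat it as a sketch under assumptions: `disable_exclude` and `enable_exclude` are hypothetical helper names, the in-place `sed -i` relies on GNU sed (which RHEL ships), the pattern assumes the exclude line starts at column one with no spaces around `=`, and the package spec passed to `yum install` is inferred from the rpm file name mentioned earlier, so check it against your own repository.

```shell
#!/bin/sh
# Comment out every active "exclude=" line in the given yum configuration file.
disable_exclude() {
    conf="${1:-/etc/yum.conf}"
    sed -i 's/^exclude=/#exclude=/' "$conf"
}

# Restore the lines that disable_exclude commented out.
enable_exclude() {
    conf="${1:-/etc/yum.conf}"
    sed -i 's/^#exclude=/exclude=/' "$conf"
}

# Intended sequence on the cell, run as root (left as comments on purpose):
# disable_exclude
# yum install redhat-release-5Server-5.7.0.3   # assumed package spec
# enable_exclude
# grep '^exclude=' /etc/yum.conf               # confirm the rule is active again
```

Taking the file path as an argument makes it easy to rehearse both helpers on a copy of yum.conf before touching the real one.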

Now you can run yum upgrade at your own pace and be sure that you stay on the supported release of Red Hat Enterprise Linux for vCloud Director 1.5.1.
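After the upgrade, a quick check of the release file can confirm that the cell still reports RHEL 5.7. The helper below is a hypothetical sketch, not part of the original procedure; it assumes the usual /etc/redhat-release wording ("... release 5.7 ...").

```shell
#!/bin/sh
# Return success when the release file reports RHEL 5.7.
# The path is an argument so the check can be tried on a sample file.
is_rhel_57() {
    release_file="${1:-/etc/redhat-release}"
    grep -q 'release 5\.7' "$release_file"
}

# On the cell, after yum upgrade has finished (left as a comment):
# is_rhel_57 && echo "still on RHEL 5.7" || echo "unexpected release, check yum.conf"
```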

Just got back from a long weekend, and I saw a nice news item waiting for me in my email box: VMware released VMware Workstation 6.0 yesterday. It is the sixth generation of the Workstation virtualization product. This version brings enhancements to the virtual devices and connectivity for virtual machines (USB 2.0 support, more network cards, multiple displays). It seamlessly runs both 32-bit and 64-bit (x86-64) environments on the same host, supports running virtual machines in the background with headless operation, and offers enhanced support for developers.
